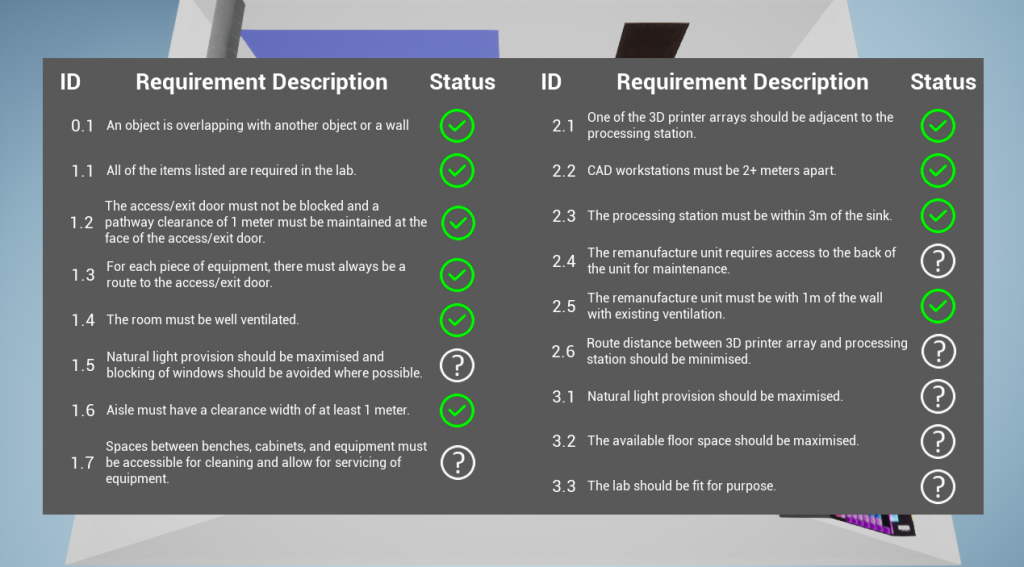

This project demonstrates that design requirements can be embedded in a mixed reality platform as rule-based constraints, so that users designing a space see the impact of each change as they make it.

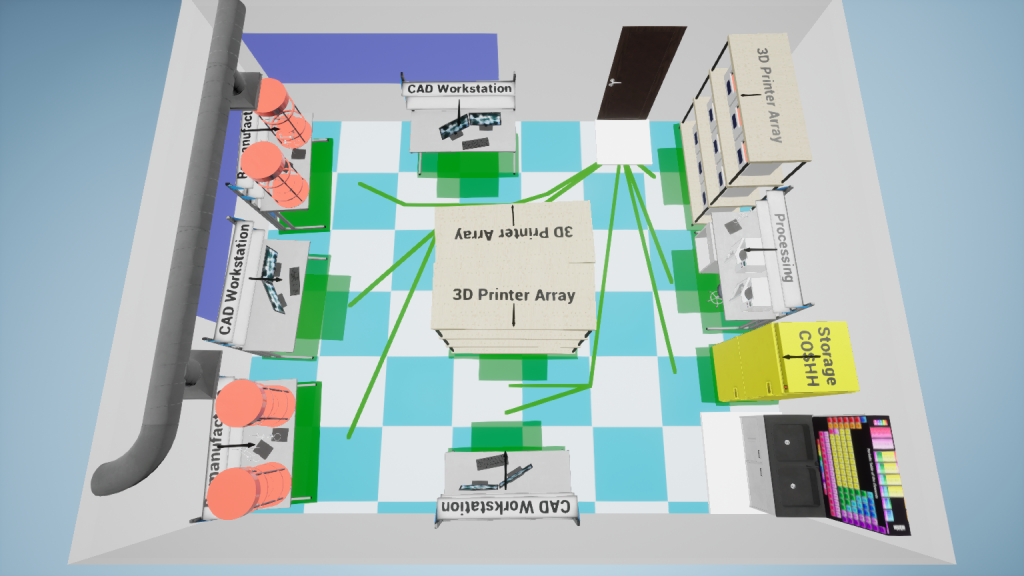

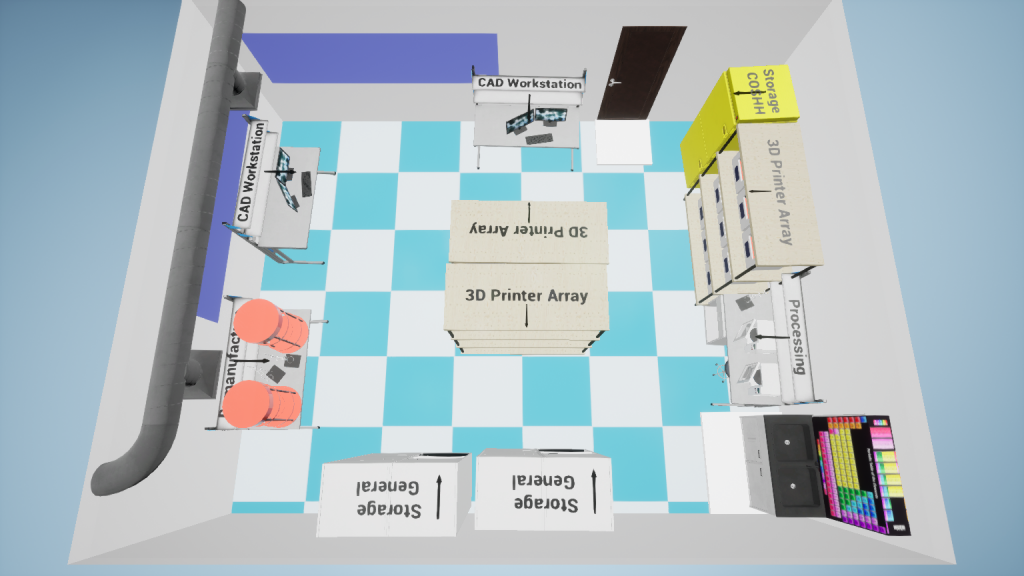

The platform, OurLab, integrates a range of mixed reality technologies, including hand tracking, fiducial markers, and a tangible user interface. By moving physical, tracked objects around, a designer can explore lab configurations that comply with given requirements. The platform analyses the design as it changes and shows the user, in real time, whether it complies with those requirements.
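To make the idea of rule-based compliance checking concrete, here is a minimal sketch in plain standard C++. It is not OurLab's actual implementation and uses no Unreal Engine API; the types `PlacedObject` and `Rule`, the functions `minClearance` and `violatedRules`, and the clearance rule itself are all hypothetical, chosen only to illustrate how a layout could be re-checked against a set of rules each time a tracked object moves.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <functional>
#include <string>
#include <vector>

// Hypothetical 2D position of a tracked physical object (for example, one
// carrying a fiducial marker). Units are metres.
struct PlacedObject {
    std::string name;
    double x;
    double y;
};

// A rule pairs a human-readable description with a predicate over the layout.
struct Rule {
    std::string description;
    std::function<bool(const std::vector<PlacedObject>&)> isSatisfied;
};

// Illustrative rule (an assumption, not taken from OurLab): every pair of
// objects must be at least minDist metres apart.
Rule minClearance(double minDist) {
    assert(minDist >= 0.0);
    return Rule{
        "minimum clearance between objects",
        [minDist](const std::vector<PlacedObject>& layout) {
            for (std::size_t i = 0; i < layout.size(); ++i) {
                for (std::size_t j = i + 1; j < layout.size(); ++j) {
                    const double d = std::hypot(layout[i].x - layout[j].x,
                                                layout[i].y - layout[j].y);
                    if (d < minDist) return false;
                }
            }
            return true;
        }};
}

// Intended to be called on every tracking update; returns the descriptions
// of all rules the current layout violates (empty means fully compliant).
std::vector<std::string> violatedRules(const std::vector<PlacedObject>& layout,
                                       const std::vector<Rule>& rules) {
    std::vector<std::string> violations;
    for (const Rule& rule : rules) {
        if (!rule.isSatisfied(layout)) violations.push_back(rule.description);
    }
    return violations;
}
```

In a sketch like this, the visualisation layer would only need the list returned by `violatedRules` to decide what feedback to show, which keeps the rules themselves independent of how compliance is displayed.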

The platform is currently being evaluated through user studies that examine how different fidelities of visualisation and immediate feedback affect the design process, for better or worse.

OurLab is built in Unreal Engine, using a combination of C++ and Blueprints. I also wrote an integrated log writer and loader to capture the data required for the user study.
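As a rough illustration of what a paired log writer and loader can look like, the sketch below writes one comma-separated line per event and reads the lines back. It is standard C++ rather than Unreal Engine code, and everything in it is an assumption made for the example: the `LogEntry` fields, the line format, and the function names `writeEntry` and `loadEntries` do not describe OurLab's actual log format.

```cpp
#include <cassert>
#include <istream>
#include <ostream>
#include <sstream>
#include <stdexcept>
#include <string>
#include <vector>

// Hypothetical log record: when an object was moved, and where to.
struct LogEntry {
    double timeSeconds;
    std::string objectName;  // must not contain ',' or newlines in this sketch
    double x;
    double y;
};

// Append one entry as a comma-separated line. Numbers use the stream's
// default formatting, so very fine precision is not preserved.
void writeEntry(std::ostream& out, const LogEntry& entry) {
    assert(entry.objectName.find_first_of(",\n") == std::string::npos);
    out << entry.timeSeconds << ',' << entry.objectName << ','
        << entry.x << ',' << entry.y << '\n';
}

// Read back every well-formed line; malformed lines are skipped.
std::vector<LogEntry> loadEntries(std::istream& in) {
    std::vector<LogEntry> entries;
    std::string line;
    while (std::getline(in, line)) {
        std::istringstream fields(line);
        std::string t, name, x, y;
        if (!std::getline(fields, t, ',') || !std::getline(fields, name, ',') ||
            !std::getline(fields, x, ',') || !std::getline(fields, y)) {
            continue;  // too few fields
        }
        try {
            entries.push_back({std::stod(t), name, std::stod(x), std::stod(y)});
        } catch (const std::exception&) {
            continue;  // a numeric field could not be parsed
        }
    }
    return entries;
}
```

Writing to `std::ostream` and reading from `std::istream` means the same two functions work with files (`std::ofstream`, `std::ifstream`) and with in-memory streams, which makes the round trip easy to check before a study session.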